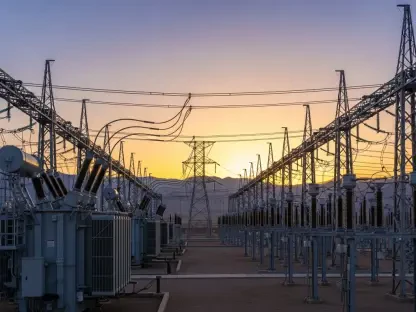

The relentless expansion of artificial intelligence has moved beyond the digital vacuum of code into a physical confrontation with the aging infrastructure of the American electrical system. While software engineers celebrate the latest breakthroughs in large language models and neural networks, a silent crisis is brewing beneath the floorboards of the nation’s data centers. The United States is currently attempting to host a 21st-century technological revolution on a mid-20th-century electrical framework. This disconnect creates a high-stakes paradox where the most sophisticated code in human history is only as powerful as the overtaxed transformers and aging copper wires that deliver its lifeblood.

The global race for intelligence operates on the dangerous assumption that the flick of a switch will always result in limitless power. However, the reality of physical infrastructure is beginning to impose a hard limit on digital dreams. Without a radical overhaul of how energy is consumed and generated, the very intelligence designed to solve humanity’s greatest problems might find itself paralyzed by a simple lack of electricity. This bottleneck represents the invisible ceiling of the intelligent age, a point where the speed of innovation meets the inertia of physical reality.

The Collision of Digital Velocity and Physical Inertia

To grasp the gravity of this situation, one must look at the unprecedented scale of the energy surge currently unfolding across the continent. U.S. data center power demand is projected to grow at a double-digit percentage rate every year through 2028, with expectations that total consumption will nearly triple by the decade’s end. This is not a mere incremental increase; it represents a fundamental shift in the national energy profile. Unlike traditional cloud storage, which primarily handles data at rest, generative AI workloads require sustained, high-intensity compute cycles that burn through electricity at a staggering rate.
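To see how the two projections relate, the compound-growth arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the article does not give a baseline year, so the 5-, 6-, and 7-year horizons below are hypothetical, as are the function names.

```python
import math


def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that turns 1x into `multiple` after `years` years."""
    return multiple ** (1.0 / years) - 1.0


def years_to_reach(multiple: float, annual_growth: float) -> float:
    """Years needed to reach `multiple` at a constant yearly growth rate."""
    return math.log(multiple) / math.log(1.0 + annual_growth)


if __name__ == "__main__":
    # Assumption: the baseline year is unknown, so several horizons are tried.
    for horizon in (5, 6, 7):
        rate = implied_annual_growth(3.0, horizon)
        print(f"Tripling over {horizon} years needs about {rate:.1%} growth per year")

    # For comparison: how long tripling would take at exactly 10% per year.
    print(f"At 10% per year, tripling takes about {years_to_reach(3.0, 0.10):.1f} years")
```

Under these assumptions, growth at the bottom of the double-digit range would not be enough: tripling within roughly six years implies sustained growth of about 20 percent per year.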

These processes demand more servers, increasingly advanced liquid cooling systems, and a level of electrical consistency that the current grid was never designed to provide. The “Intelligent Age” is effectively running into a wall of physical reality that no software update can bypass. As more organizations integrate AI into their core operations, the demand for “always-on” power has become non-negotiable. This relentless thirst for energy is forcing a confrontation between the rapid pace of the tech sector and the historically slow-moving development cycles of the energy industry.

Structural Bottlenecks and the Breaking Point of Public Utilities

The strain on the American electrical system has transitioned from a theoretical concern for engineers into a tangible economic threat. PJM Interconnection, the largest grid operator in the nation, currently serves as a bellwether for this looming instability. The rapid clustering of data centers in specific regions is pushing generation capacity to its limits, raising significant concerns about grid failure during extreme weather events. When demand approaches the grid’s peak capacity, the competition for power between a massive AI cluster and a local residential neighborhood becomes a zero-sum game.

Beyond immediate capacity issues, a secondary crisis exists in the form of interconnection queues. Permitting delays, material shortages, and a scarcity of skilled labor mean that new energy projects often languish for years before they can connect to the grid. With new supply stuck in the queue while demand keeps climbing, the financial burden of rapid expansion is often passed through to household utility rates. Consequently, technological growth is increasingly viewed as a competitor for public affordability, creating a volatile political environment in which the expansion of AI is treated as a direct threat to the stability of local communities.

Expert Perspectives on the Bring Your Own Power Mandate

Energy leaders, including Ameresco CEO George Sakellaris, suggest that the era of the passive utility model has come to an end. Relying solely on a standard utility line for a multi-billion-dollar AI facility is now considered a high-risk strategy that exposes companies to catastrophic downtime and lost revenue. In a landscape where every millisecond of compute time equates to a competitive advantage, the unpredictability of the public grid becomes an unacceptable liability. Data center operators are beginning to realize that the only way to ensure their future is to take control of their own supply.

Analysts argue that data centers must be treated with the same urgency as hospitals or military installations. These critical institutions do not assume the grid will always be functional; they build with an inherent expectation of self-sufficiency. If the United States does not pivot its energy strategy to accommodate this mindset, it risks a self-inflicted constraint on national competitiveness. If power becomes the primary bottleneck for innovation, investment will inevitably migrate toward regions with more flexible energy policies and a more resilient electrical infrastructure.

Strategies for Building a Self-Contained Energy Ecosystem

For the AI revolution to continue its trajectory, developers are transitioning from being energy consumers to becoming active energy producers. This “Bring Your Own Power” framework involves deploying distributed generation, such as advanced natural gas turbines or small modular reactors, directly on the data center property. By meeting their primary needs on site rather than through the traditional grid, these facilities can insulate themselves from external instability and reduce the pressure on public resources. This shift represents a move toward a more decentralized and resilient energy architecture.

Furthermore, the integration of advanced microgrids and sophisticated battery storage allows these digital fortresses to operate independently during periods of peak demand. This shift changes the fundamental relationship between tech giants and power providers. In this emerging model, the public grid acts as a coordinator and provider of baseline connectivity, while the data centers provide their own distributed resiliency to manage massive, fluctuating compute loads. This synergy is essential for maintaining the uptime required by the next generation of neural networks.

The realization that the AI boom is tethered to the energy sector is prompting a fundamental shift in industrial planning. Policymakers are beginning to recognize that maintaining national leadership requires a departure from centralized energy reliance toward a more distributed, resilient model. This evolution encourages the adoption of localized nuclear power and hydrogen fuel cells as viable alternatives for massive compute clusters. As a result, energy independence for data centers is transforming from an experimental luxury into a mandatory standard for survival. Operators that integrate renewable generation with on-site storage can decouple their growth from the limitations of the aging public infrastructure. This proactive approach can secure the reliability of the intelligent age while alleviating the pressure on the national grid, helping the technological revolution remain sustainable for all sectors of society.