The 160-Gigawatt Question: Are We Really Running Out of Power?

The hum of a data center floor and the sudden chill of a furnace failing during a winter storm are stark reminders of how completely the continent depends on a stable flow of electrons. Headlines grow increasingly dire: aging power plants are retiring, data centers are consuming electricity at an unprecedented rate, and the American power grid is supposedly teetering on the edge of systemic collapse. For the average citizen, this translates into a single fear: will the lights stay on when the next heat wave or winter storm hits? While the North American Electric Reliability Corp. (NERC) warns of a high-risk environment characterized by imminent shortages, a growing chorus of energy experts suggests that the crisis might be more a product of conservative modeling than a physical lack of resources.

The tension between these perspectives reveals a fundamental disagreement over how to measure the strength of modern infrastructure. NERC’s assessment points to potential power shortages, yet critics argue that these risks are significantly overstated. The disagreement hinges on three variables: the speed of load growth from data centers, the realistic rate of new power generation additions, and the role of interregional electricity sharing during peak demand periods. If the current regulatory outlook accurately reflects an impending crisis, the implications for energy policy are vast. If the projections are overly conservative, however, the nation might be bracing for a disaster that is statistically unlikely to occur.

Decoding the Reliability Debate: Why the Forecast Matters

Understanding the stability of the US power grid is not just an academic exercise; it carries profound political and economic consequences. The projections released in NERC's Long-Term Reliability Assessment serve as the primary roadmap for federal policymakers and grid operators. If these reports signal a crisis, they can be used to justify delaying the retirement of fossil fuel plants or fast-tracking conventional energy projects. These findings have already become a rhetorical tool for proponents of traditional energy, who use the reliability crisis narrative to advocate for a return to older, carbon-intensive generation methods as a safety measure.

Conversely, if the risks are overstated, the country may miss opportunities to transition toward a more flexible, modern energy system. At its core, the debate centers on whether the grid is facing a genuine energy deficit or simply a planning evolution. Federal regulators have characterized certain reports as a call to action, warning that adhering to the status quo would inevitably lead to a national energy crisis. Yet, the choice of which data to prioritize determines whether billions of dollars are invested in extending the life of old plants or building the transmission lines of the future.

Analyzing the Drivers of Grid Stress and Potential Overestimations

NERC projects a massive 160 GW demand increase by 2030, with over half driven by artificial intelligence and data infrastructure. This surge in demand is often cited as the primary reason for the precarious state of the grid. However, global shortages of power transformers and advanced AI chips act as natural speed bumps that may delay the very demand spikes that regulators fear. If the hardware required to build these massive data centers cannot be procured at the projected rate, the actual demand on the grid will rise much more slowly than the current models anticipate.

Furthermore, current forecasting methods often fail to account for overlapping load requests, where multiple developers may request power for the same regional capacity, leading to inflated growth projections. This double-counting creates a skewed picture in which every proposed project is assumed to be completed simultaneously. Emerging doubts on Wall Street regarding the long-term returns on AI investments could also cool the aggressive construction pace currently predicted. Updating these forecasts to align with realistic chip-industry growth rates could make the perceived reliability gap in most regions look significantly less threatening.
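The double-counting effect can be illustrated with a small sketch. All project names, utilities, and megawatt figures below are hypothetical and chosen only for illustration; the rule of counting each project once at its largest request is an assumption, not a method attributed to NERC or any forecaster.

```python
from collections import defaultdict

# Hypothetical load requests: (project_id, utility, requested_mw).
# The same project_id appearing under several utilities models one
# data center being proposed in multiple service territories.
requests = [
    ("dc-alpha", "utility_a", 500),
    ("dc-alpha", "utility_b", 500),
    ("dc-alpha", "utility_c", 450),
    ("dc-beta", "utility_a", 300),
    ("dc-gamma", "utility_b", 200),
    ("dc-gamma", "utility_c", 200),
]

def naive_total(reqs):
    """Sum every request as if each one were a separate project."""
    return sum(mw for _, _, mw in reqs)

def deduplicated_total(reqs):
    """Count each project once, using its largest single request."""
    largest = defaultdict(int)
    for project, _, mw in reqs:
        largest[project] = max(largest[project], mw)
    return sum(largest.values())

naive = naive_total(requests)
dedup = deduplicated_total(requests)
print(f"Naive forecast: {naive} MW")          # Naive forecast: 2150 MW
print(f"Deduplicated forecast: {dedup} MW")   # Deduplicated forecast: 1000 MW
```

In this toy data set the naive sum is more than double the deduplicated figure. Real requests rarely carry a shared project identifier, so matching duplicates in practice would be considerably harder than this sketch suggests.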

The Hidden Reservoir: Underestimated Supply and Interregional Support

Thousands of wind, solar, and battery storage projects are currently stuck in regulatory study phases, and NERC’s conservative counting often ignores these resources until they have final agreements. Research from the Lawrence Berkeley National Laboratory suggests there is significantly more accredited capacity ready to connect than official assessments acknowledge. By failing to account for these likely-to-connect resources, regulators create a low-generation scenario that makes the grid appear more fragile than it is. The interconnection queue represents a massive pipeline of energy that is often left out of the most pessimistic reliability equations.
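The gap between the two counting approaches can be sketched as follows. The project list, capacities, and completion probabilities are invented for illustration and do not come from NERC or Lawrence Berkeley National Laboratory; the probability weighting is one possible way to credit queued projects, assumed here for the sake of the example.

```python
# Hypothetical queue entries:
# (name, accredited_mw, has_final_agreement, assumed_completion_probability)
queue = [
    ("solar_1", 100, True, 0.9),
    ("wind_1", 200, False, 0.5),
    ("battery_1", 50, False, 0.4),
    ("solar_2", 150, False, 0.2),
]

def signed_only_capacity(projects):
    """Conservative count: only projects with a final agreement."""
    return sum(mw for _, mw, signed, _ in projects if signed)

def expected_capacity(projects):
    """Probability-weighted count across the whole queue."""
    return sum(mw * prob for _, mw, _, prob in projects)

conservative = signed_only_capacity(queue)
expected = expected_capacity(queue)
print(f"Signed agreements only: {conservative} MW")
print(f"Probability-weighted:   {expected:.0f} MW")
```

With these made-up inputs the conservative count is 100 MW, while the weighted count comes to roughly 240 MW. The result depends entirely on the completion probabilities, which are the contested quantity in the debate.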

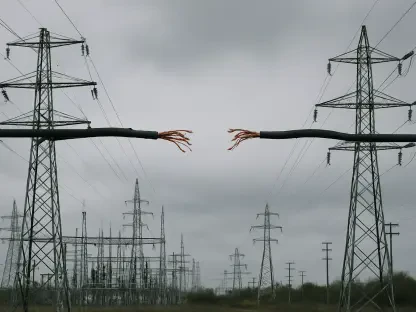

Another point of contention involves firm versus non-firm power transfers between different geographical regions. While regulators often only count guaranteed power contracts, history shows that regions frequently share massive amounts of emergency power during crises. Historical data from the Energy Information Administration demonstrates that the grid is far more interconnected and resilient during peak demand than rigid worst-case models suggest. During extreme weather events, regions have successfully imported gigawatts of power to stabilize their systems, proving that the collective strength of the interconnected grid is greater than the sum of its isolated parts.

Strategies for a More Resilient and Transparent Energy Future

Streamlining the interconnection process should be a priority: reducing the bureaucratic backlog would let renewable energy projects move from the queue to the grid faster. That requires a fundamental shift in how regulators view the pipeline of new projects, recognizing that speed is as vital as stability. Investing in interregional transmission is also necessary to build the physical superhighways that move electricity across state lines during localized weather extremes. These connections serve as a primary defense against the unpredictability of climate-driven demand spikes.

Adopting probabilistic planning would shift the industry from worst-case scenario modeling toward more realistic, data-driven forecasting that accounts for technological trends and market dynamics. This approach lets operators prepare for likely events rather than being paralyzed by extreme outliers. Modernizing load data collection would improve transparency in how emerging industries, such as AI, report their energy needs, preventing the redundant or inaccurate demand projections that fuel narratives of collapse. Ultimately, the grid can evolve through better planning and updated data, so that the physical system remains capable of meeting the needs of a changing economy.
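The difference between worst-case and probabilistic planning can be made concrete with a toy calculation. The demand and supply scenarios and their probabilities below are hypothetical, and the sketch assumes demand and supply are independent, which real planning studies would not take for granted.

```python
from itertools import product

# Hypothetical peak-hour scenarios: (megawatts, probability).
demand_scenarios = [(900, 0.60), (1000, 0.30), (1100, 0.10)]
supply_scenarios = [(1200, 0.70), (1050, 0.25), (950, 0.05)]

def worst_case_margin(demand, supply):
    """Lowest supply minus highest demand, ignoring how likely each is."""
    return min(mw for mw, _ in supply) - max(mw for mw, _ in demand)

def shortfall_probability(demand, supply):
    """Probability that demand exceeds supply, assuming independence."""
    return sum(
        p_d * p_s
        for (d, p_d), (s, p_s) in product(demand, supply)
        if d > s
    )

margin = worst_case_margin(demand_scenarios, supply_scenarios)
risk = shortfall_probability(demand_scenarios, supply_scenarios)
print(f"Worst-case margin: {margin} MW")
print(f"Probability of shortfall: {risk:.1%}")
```

In this example the worst-case view shows a 150 MW deficit, while the probabilistic view puts the chance of any shortfall at about 4.5 percent. Both numbers describe the same system; they answer different questions.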