As New York City navigates an ambitious transition toward a carbon-neutral future, the friction between aging infrastructure and rapid renewable deployment has reached a boiling point. Christopher Hailstone joins us to dissect this tension, drawing on his deep background in energy management and utility regulation to explain why the city’s power grid is at a crossroads. With a career dedicated to ensuring grid reliability, he offers a unique perspective on the regulatory battles shaping the landscape for battery storage and electricity delivery in one of the world’s most complex urban environments.

The conversation explores the dramatic financial shifts caused by new utility testing protocols, the technical challenges of integrating a massive surge in storage projects, and the looming reliability gaps as traditional fossil fuel plants are retired. We also delve into the strategic differences between localized distribution projects and large-scale transmission installations, looking for a path forward that balances aggressive clean energy goals with the fundamental need for a stable, affordable power supply.

Interconnection costs for certain battery projects have recently surged by an average of 14 times, reaching upwards of $21 million per site. How does a two-part reliability test specifically trigger these massive financial shifts, and what practical steps can developers take to navigate these new cost structures?

The financial “sticker shock” we are seeing is a direct result of the utility shifting how it calculates the impact of storage on the local grid. When the “two-part test” was introduced, it moved the goalposts by checking whether a project would create a new peak at an area station or exceed a 70% reliability threshold, which effectively forces developers to pay for massive system-wide upgrades that were previously unnecessary. In practice, 34 projects totaling 161 MW were hit with these unsolicited re-studies, leading to that staggering $21 million average increase per site. For developers, navigating this means moving away from a “plug-and-play” mindset and toward more sophisticated modeling that proves their system won’t inadvertently trigger these new peaks. It requires a much deeper level of collaboration with utility engineers early in the process to ensure that the proposed charge and discharge cycles don’t push a substation past that 70% threshold, which is where the heaviest costs are currently being assessed.
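To make the logic of the two-part test concrete, here is a minimal Python sketch of the kind of screening a developer might run before approaching the utility. It is an illustration under stated assumptions, not the utility's actual study method: the interview does not say what the 70% threshold is measured against, so the sketch assumes it is a fraction of a station's rated capacity, and `StationProfile`, `two_part_test`, and all load figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class StationProfile:
    capacity_mw: float               # assumed station rating (hypothetical figure)
    hourly_load_mw: Sequence[float]  # existing load, one value per hour


def two_part_test(station, battery_mw_by_hour, threshold=0.70):
    """Screen a battery schedule against both parts of the test.

    battery_mw_by_hour: positive values are charging (added load),
    negative values are discharging (load relief).
    ASSUMPTION: the 70% threshold is a share of station capacity.
    """
    existing_peak = max(station.hourly_load_mw)
    net_load = [load + batt for load, batt
                in zip(station.hourly_load_mw, battery_mw_by_hour)]
    new_peak = max(net_load)

    creates_new_peak = new_peak > existing_peak                        # part 1
    exceeds_threshold = new_peak > threshold * station.capacity_mw     # part 2
    return {
        "creates_new_peak": creates_new_peak,
        "exceeds_threshold": exceeds_threshold,
        "passes": not (creates_new_peak or exceeds_threshold),
    }


# Hypothetical 24-hour profiles: overnight, daytime, evening peak, late evening.
quiet_station = StationProfile(100.0, [40.0] * 6 + [60.0] * 12 + [65.0] * 4 + [45.0] * 2)
busy_station = StationProfile(100.0, [62.0] * 6 + [60.0] * 12 + [65.0] * 4 + [45.0] * 2)

# A 5 MW battery that charges overnight and discharges into the evening peak.
schedule = [5.0] * 6 + [0.0] * 12 + [-5.0] * 4 + [0.0] * 2

print(two_part_test(quiet_station, schedule))
print(two_part_test(busy_station, schedule))
```

In this toy case the same battery passes at the station with a low overnight load and fails at the one where overnight charging lifts the load above the existing evening peak, which is the kind of outcome the modeling described above is meant to catch early.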

With approximately 85% of specific service territories currently restricted for distributed energy storage and dozens of substations reaching capacity limits, what specific grid infrastructure improvements are most urgent? How can planners prioritize these upgrades while maintaining energy affordability for the broader customer base?

The most urgent need is addressing the 20 out of 63 substations that are currently at or near their hosting capacity limits, which has effectively gridlocked 85% of the service territory for new storage projects. We need to focus on sub-transmission upgrades and “smart” monitoring equipment that allows for more dynamic management of power flows, rather than just building bigger, more expensive physical transformers. Planners have to be incredibly strategic because the grid was originally built to serve customer load, not to host massive batteries at any scale or location. To keep things affordable, the utility is looking for projects that provide real economic value by deferring billion-dollar infrastructure upgrades rather than projects that just seek to maximize developer profits. By prioritizing upgrades in the 10 substations where 65% of the queue is currently clustered, we can unlock the most capacity for the lowest relative cost to the ratepayer.

Concerns have surfaced that charging batteries during off-peak hours may create new overnight load peaks that exceed current daytime maximums. What technical strategies or smart-charging protocols can prevent these secondary peaks, and how should these solutions be integrated into current interconnection study methodologies?

This is a classic case of unintended consequences, where a solution for daytime peaks creates a new crisis at 3:00 AM when the wind is blowing and batteries are thirsting for cheap power. With the project queue surging 300% to 2.5 GW, which represents about 25% of the city’s total peak load, the risk of creating a new overnight “super-peak” is very real. We need to implement automated demand-side management protocols that stagger charging times based on real-time substation health rather than just a fixed clock. These protocols should be baked into the interconnection studies as “non-firm” agreements, where the utility has the right to throttle charging if a secondary peak starts to form. By integrating these dynamic limits into the study methodology, we can allow more batteries onto the grid without needing to overbuild the physical infrastructure for a peak that only exists for a few hours a night.
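One way to picture a “non-firm” throttle is as a headroom calculation repeated at each interval. The Python sketch below is a simplified illustration, not a protocol any utility is known to use: it assumes the limit is a single megawatt ceiling for the substation (for example, the existing daytime peak) and that curtailment is shared pro rata. The function name `allocate_charging` and every number are hypothetical.

```python
def allocate_charging(substation_load_mw, limit_mw, requests_mw):
    """Scale back charging requests so total load stays at or under the limit.

    substation_load_mw: current non-battery load at the substation
    limit_mw: ceiling the utility wants to stay under (ASSUMED to be a
              single MW figure, e.g. the existing daytime peak)
    requests_mw: dict of battery name -> requested charging power in MW
    """
    headroom = max(0.0, limit_mw - substation_load_mw)
    total_requested = sum(requests_mw.values())

    # Enough room for everyone: grant requests as submitted.
    if total_requested <= headroom:
        return dict(requests_mw)

    # Otherwise curtail every battery by the same proportion (pro rata).
    scale = headroom / total_requested
    return {name: mw * scale for name, mw in requests_mw.items()}


# Hypothetical 3:00 AM snapshot: 58 MW of load against a 65 MW ceiling,
# with three batteries asking for 14 MW of charging in total.
granted = allocate_charging(58.0, 65.0, {"site_a": 5.0, "site_b": 5.0, "site_c": 4.0})
print(granted)
```

Pro rata sharing is only one possible design choice. A real agreement could just as well curtail by queue position, contract terms, or state of charge, and the interview does not specify which.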

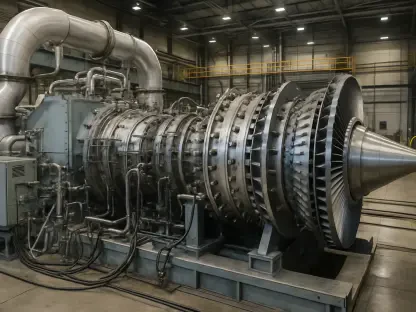

As aging fossil fuel plants deactivate, urban centers face potential energy shortages within the next several years despite new renewable projects. How do distribution-level storage projects compare to transmission-scale installations in addressing this specific reliability gap, and what are the primary trade-offs in their deployment timelines?

We are facing a very tight deadline, with the NYISO warning of a 148 MW shortage by 2030 as we retire 672 MW of aging fossil fuel capacity. Transmission-scale projects, like the 842-MW Arthur Kill site, are heavy hitters that can replace those retiring plants more directly, but they often face grueling multi-year timelines and complex permitting. In contrast, distribution-level projects under 5 MW can theoretically be deployed much faster and closer to where the power is actually used, providing a “neighborhood-level” safety net. The trade-off is that these smaller projects are currently the ones getting bogged down in the 85% restricted territory and the $21 million upgrade costs. While transmission-scale projects have been hailed as excellent partners by the utility, the smaller, distributed projects are the ones that could provide immediate relief if we can solve the current interconnection gridlock.

The current project queue has surged by 300% in a short window, yet many projects are clustered in areas where land and zoning are most affordable. How can regulators balance the developer’s need for cost-effective siting with the utility’s requirement to avoid overloading specific local substations?

This is the central conflict of “efficient siting” versus “cheap siting,” where 65% of the storage capacity in the queue is trying to squeeze into just 10 substations because that’s where the land is cheapest. Regulators need to move toward a more transparent, map-based incentive system that clearly signals where the grid actually needs power, rather than letting developers find the cheapest vacant lot and hoping the grid can handle it. If we provide higher credits for projects that site in “high-value” areas, even if the land is more expensive, we can naturally steer development away from those 10 overloaded substations. This balancing act requires moving away from a first-come, first-served queue and toward a “best-fit” model that rewards projects for helping the grid maintain reliability. It’s about creating a pricing signal that reflects the true cost of the infrastructure required to support a project at a specific location.
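The difference between a first-come, first-served queue and a “best-fit” ordering can be shown with a small Python sketch. This is a deliberately simple illustration: it assumes “fit” means the hosting headroom left at a substation after a project connects, which is only one of many ways a regulator might score projects. The `QueuedProject` class, the substation names, and the headroom figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class QueuedProject:
    name: str
    queue_position: int  # order of application (1 = first to apply)
    size_mw: float
    substation: str


def best_fit_order(projects, headroom_mw):
    """Rank projects by headroom left at their substation after connecting.

    ASSUMPTION: more remaining headroom means a better fit. Ties fall back
    to queue position, so earlier applicants still win when all else is equal.
    """
    def sort_key(project):
        remaining = headroom_mw[project.substation] - project.size_mw
        return (-remaining, project.queue_position)

    return sorted(projects, key=sort_key)


queue = [
    QueuedProject("A", 1, 5.0, "crowded_station"),
    QueuedProject("B", 2, 5.0, "open_station"),
    QueuedProject("C", 3, 3.0, "crowded_station"),
]
headroom = {"crowded_station": 2.0, "open_station": 20.0}  # MW, hypothetical

print([p.name for p in best_fit_order(queue, headroom)])
```

Under first-come, first-served, project A would be studied first even though it asks for more than its substation can host. The best-fit ordering moves project B, sited where the grid has room, to the front.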

What is your forecast for the future of distributed energy storage in New York City?

My forecast is that we are entering a “correction year” where the explosive 300% growth in the queue will be met with a much more disciplined, utility-driven deployment strategy. While the current emergency petition to roll back the “two-part test” highlights the friction, the long-term reality is that we will see a shift toward a comprehensive portfolio of solutions, including more sophisticated demand-side management and non-emitting resources. By this summer, as the state regulators review the portfolio of solutions for the 2030 reliability gap, I expect we will see a new framework that moves away from the 14-fold cost increases in favor of shared infrastructure costs. Distributed storage will remain the backbone of the city’s reliability, but only if we can successfully transition from an era of “unrestricted hosting” to a model where batteries are actively managed as a dynamic part of the utility’s own reliability toolkit.