As a veteran of the energy sector with a deep focus on grid reliability and the evolving mechanics of electricity delivery, Christopher Hailstone brings a seasoned perspective to the current crossroads facing American utilities. With years spent navigating the intersection of renewable integration and aging infrastructure, he has become a go-to voice for understanding how regional markets succeed or fail in the face of unprecedented demand. This conversation centers on the strategic pivot away from traditional regional grid operators, the massive capital requirements driven by the data center boom, and the sophisticated financial maneuvers required to shield residential consumers from the rising costs of a modernized grid.

The following discussion explores the friction between utility speed and regulatory inertia, particularly regarding the slow pace of generation interconnection in major markets. We examine the rise of the “genco” model as a tactical workaround for industrial load and the delicate balancing act of managing a $78 billion capital plan while mitigating the environmental liabilities of a legacy coal fleet.

Some major utilities are weighing an exit from regional grid operators like PJM and SPP due to slow interconnection speeds. How would a utility transition to “alternative structures,” and what specific performance metrics must improve for a grid operator to retain its members?

Transitioning to alternative structures is a high-stakes maneuver that involves re-evaluating how a utility interacts with the wholesale market, potentially shifting toward more localized control or bilateral agreements. To pull this off, a utility has to meticulously decouple its transmission assets from the regional operator’s control, a process fraught with regulatory hurdles that also requires new reliability coordination arrangements. The frustration we are seeing stems from the “fits and starts” of government-led reforms that simply aren’t moving the needle fast enough for companies trying to connect new power. For a grid operator to retain its members, it must demonstrate a radical improvement in both the speed of its stakeholder approval process and the physical connection of generation assets. We are looking for a measurable reduction in the time it takes to move a project from the queue to energized status, because right now, the slow pace is a direct threat to keeping up with the surging 190 GW pipeline of active projects.

With 63 GW of contracted large load arriving by 2030, mostly from data centers, how do you balance a $78 billion capital plan with the goal of limiting residential rate increases? What trade-offs are required when prioritizing these massive projects over traditional distribution upgrades?

The scale of the $78 billion capital plan is staggering, but the key to protecting residential ratepayers lies in the sheer volume of that 63 GW of contracted load, which has jumped 13% in just three months. By integrating these massive data center projects, we can generate up to $16 billion in “offsets,” which effectively shift a heavier portion of the utility’s fixed costs onto large industrial users. This strategy is what allows us to project a manageable 3.5% average annual rate increase for residential customers despite the massive infrastructure spend. However, the trade-off is intense: it means prioritizing $33 billion in transmission and $24 billion in generation projects that serve these high-demand clusters over some of the more localized distribution upgrades. There is palpable tension in the boardroom as we work to ensure that the $17 billion allocated for distribution is enough to maintain the local wires while we chase the massive growth in the commercial and industrial sectors.
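To put the figures in this answer side by side, here is a rough back-of-the-envelope sketch. It uses only numbers quoted in the interview (the $78 billion plan, the $33/$24/$17 billion category splits, the 63 GW of contracted load and its 13% three-month jump, and the 3.5% average annual residential increase). The five-year horizon, and the treatment of whatever the three named categories leave uncovered as "other" spending, are assumptions for illustration, not figures from the source.

```python
"""Back-of-the-envelope check of the capital plan figures quoted in the interview.

Assumptions (not stated in the source): the rate projection spans five years,
and spending outside the three named categories is lumped together as "other".
"""

PLAN_TOTAL_BN = 78.0  # headline capital plan, $ billions (quoted as $77.9B elsewhere)

# Category splits quoted in the interview, $ billions
ALLOCATIONS_BN = {
    "transmission": 33.0,
    "generation": 24.0,
    "distribution": 17.0,
}

CONTRACTED_LOAD_GW = 63.0         # contracted large load arriving by 2030
THREE_MONTH_GROWTH = 0.13         # reported jump in contracted load over three months
AVG_ANNUAL_RATE_INCREASE = 0.035  # projected average residential increase per year
YEARS = 5                         # assumed planning horizon


def unallocated_remainder(total: float, allocations: dict[str, float]) -> float:
    """Return the part of the plan not covered by the named categories."""
    return total - sum(allocations.values())


def implied_prior(current: float, growth: float) -> float:
    """Back out the earlier value from the current value and its growth rate."""
    return current / (1 + growth)


def cumulative_increase(annual_rate: float, years: int) -> float:
    """Compound an average annual increase over a number of years."""
    return (1 + annual_rate) ** years - 1


if __name__ == "__main__":
    named = sum(ALLOCATIONS_BN.values())
    print(f"Named categories: ${named:.0f}B of ${PLAN_TOTAL_BN:.0f}B")
    for name, amount in ALLOCATIONS_BN.items():
        print(f"  {name:<12} ${amount:>4.0f}B  ({amount / PLAN_TOTAL_BN:.0%} of plan)")
    print(f"Other/unallocated: ${unallocated_remainder(PLAN_TOTAL_BN, ALLOCATIONS_BN):.0f}B")
    prior = implied_prior(CONTRACTED_LOAD_GW, THREE_MONTH_GROWTH)
    print(f"Implied contracted load three months earlier: ~{prior:.1f} GW")
    total_rise = cumulative_increase(AVG_ANNUAL_RATE_INCREASE, YEARS)
    print(f"Cumulative residential increase over {YEARS} years: {total_rise:.1%}")
```

Under these assumptions, the three named categories account for $74 billion of the plan, and a 3.5% average annual increase compounds to roughly 18.8% over five years. Because the 13% jump is itself a rounded figure, the implied earlier load of about 55.8 GW should be read as approximate.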

There is a growing interest in adopting “genco” models to serve large industrial loads in specific states. What are the operational steps to implement this non-utility structure, and how does it help bypass the current interconnection bottlenecks seen in traditional regional transmission markets?

Implementing a “genco” model, particularly in states like West Virginia, involves carving out generation assets into an unregulated entity that can operate outside the traditional rate-based utility framework. This requires a complex legal separation of assets and the establishment of new power purchase agreements that link the generation directly to the industrial load. By doing this, a utility can participate in reliability backstop auctions and bypass the standard PJM or SPP interconnection queues, which are currently bogged down by bureaucracy. It feels like finding a pressure valve in a system that is about to burst; it allows for a more agile response to customer needs without being tethered to the slow-moving stakeholder processes of the regional operators. This non-utility structure is essentially a workaround that provides the speed and flexibility that data center developers are screaming for in today’s market.

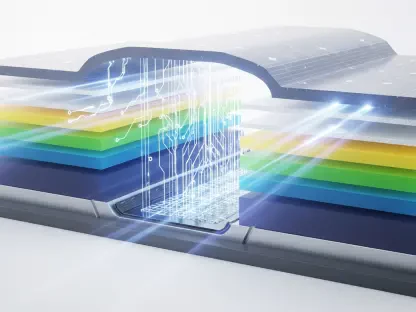

Expanding 765-kV transmission projects while managing over 10 GW of coal-fired capacity requires significant capital. How do you mitigate the financial risks of environmental mandates, such as groundwater treatment costs, while simultaneously funding a $33 billion transmission expansion across multiple states?

Managing the financial risk of a 10.2 GW coal-fired fleet is like walking a tightrope while the wind is picking up, especially with looming mandates for groundwater treatment and ash removal. These environmental costs are “substantial” and could easily derail cash flows if not managed with extreme caution and proactive legal strategy. We mitigate this by aggressively pursuing rate increases and transmission investments that provide a steady return, while also looking for “gains related to renewable contract terminations” to bolster the bottom line. The $33 billion transmission expansion, including flagship efforts like the 765-kV Piketon project in Ohio, is actually a defensive move; it diversifies the asset base away from legacy coal. By shifting the weight of the company toward high-voltage transmission, we create a more resilient financial profile that can better absorb the “material and adverse” hits that might come from environmental regulatory shifts.

Meeting a $47 billion operating cash flow target involves using hybrid instruments and structured financing. Can you walk through the step-by-step process of selecting these financial vehicles and explain how they support a “growth equity” strategy during periods of high interest rates?

Selecting the right financial vehicles in a high-interest-rate environment requires a “full range of tools” approach, starting with a rigorous analysis of the cost of capital versus the projected returns on our $77.9 billion capital expenditure plan. First, we identify the projects with the most certain regulatory recovery; then we layer in hybrid instruments, which offer equity-like characteristics without the same level of dilution, to maintain our credit ratings. Structured financing is then used to ring-fence specific assets, like new gas-fired generation in Indiana, allowing us to tap into specialized pools of capital. This “growth equity” strategy is designed to keep the engine humming even when borrowing costs are high, ensuring we hit that $47 billion cash flow target over the five-year period. It’s a sophisticated game of financial Tetris where every piece, from first-quarter income of $874 million to “equity-like” instruments, must fit perfectly to fund the expansion without over-leveraging the balance sheet.
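The gap between the capital plan and the cash flow target is, roughly, what the financing toolkit has to close. The minimal sketch below assumes that the $77.9 billion plan and the $47 billion operating cash flow target cover the same five-year window, which the interview implies but does not state outright. It also ignores dividends, debt maturities, and timing. The 50% equity credit applied to hybrids is a hypothetical parameter chosen for illustration; actual treatment depends on the instrument's terms and on each rating agency's methodology.

```python
"""Sketch of the external-funding gap implied by the interview figures.

Assumptions (not from the source): the capital plan and the operating cash
flow target cover the same five-year window; dividends and debt maturities
are ignored; a hybrid security is credited as 50% equity / 50% debt for
leverage purposes (hypothetical parameter).
"""

CAPEX_PLAN_BN = 77.9
OPERATING_CASH_FLOW_BN = 47.0
HYBRID_EQUITY_CREDIT = 0.5  # hypothetical equity-content share for hybrids


def funding_gap(capex: float, operating_cash_flow: float) -> float:
    """Capital spending not covered by internally generated cash."""
    return max(capex - operating_cash_flow, 0.0)


def leverage_split(hybrid_issued: float, equity_credit: float) -> tuple[float, float]:
    """Split a hybrid issue into its (equity-like, debt-like) portions."""
    equity_like = hybrid_issued * equity_credit
    return equity_like, hybrid_issued - equity_like


if __name__ == "__main__":
    gap = funding_gap(CAPEX_PLAN_BN, OPERATING_CASH_FLOW_BN)
    print(f"External funding needed: ${gap:.1f}B")
    # Illustrative only: how a hypothetical $5B hybrid issue would be counted.
    equity_like, debt_like = leverage_split(5.0, HYBRID_EQUITY_CREDIT)
    print(f"$5.0B hybrid -> ${equity_like:.1f}B equity-like, ${debt_like:.1f}B debt-like")
```

Under these assumptions, roughly $30.9 billion would have to come from external sources. Partial equity credit is what lets hybrids support credit metrics without issuing the full amount as common stock.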

Retail sales are surging in the commercial and industrial sectors even as residential demand fluctuates. What infrastructure investments are most critical to support this 13.6% growth in industrial load, and how do these projects produce offsets that benefit the broader customer base?

The 13.6% surge in industrial and commercial sales is the primary engine of growth right now, and it demands a laser focus on high-capacity infrastructure like 765-kV lines and robust gas-fired generation. Projects like the Piketon transmission initiative and the fuel cell initiatives in Wyoming are critical because they provide the “backbone” needed to support the immense power draws of modern data centers. These investments are the very things that produce the $16 billion in offsets I mentioned earlier: as these large customers plug into the new 765-kV lines, they pay into the system’s fixed costs, spreading those costs over a much larger base and effectively subsidizing the grid for everyone else. It’s a symbiotic relationship where the industrial boom pays for the very modernization that keeps residential rates from skyrocketing. Even when residential sales dip because of weather, as we saw with a recent 6.2% decline, the industrial side provides a reliable, growing floor for our total retail sales.

What is your forecast for the future of regional transmission organizations?

I forecast a period of intense fragmentation where the traditional, all-encompassing power of organizations like PJM and SPP will be challenged by utilities seeking more “responsive” and “effective” localized models. While these regional operators won’t disappear, we will likely see a hybrid future where “genco” structures and unregulated generation play a much larger role in bypassing the bottlenecks of the standard interconnection queue. The pressure from 63 GW of contracted load is simply too great for the status quo to hold; if these grid operators do not modernize their stakeholder processes within the next three to five years, we will see a significant migration toward independent, utility-led transmission corridors. Ultimately, the grid of the future will be defined by whoever can move at the speed of the data center industry, and right now, the utilities are the ones showing the most urgency to cross that finish line.