The silent hum of the American power grid is rapidly transforming into a frantic roar as the nation grapples with a sudden surge in electricity demand that threatens to outpace the existing energy infrastructure. After decades of relatively flat growth, the United States is staring down a massive 128-gigawatt shortfall that must be addressed within the next five years. This is not a distant concern for a future generation; it is an immediate crisis generated by the exponential growth of artificial intelligence data centers, a massive domestic manufacturing resurgence, and the widespread electrification of heavy industry. The traditional model of building centralized power plants and waiting a decade for transmission lines to connect them is no longer a viable strategy for an economy that requires power today, not in the next decade.

As the “time to power” becomes the primary metric of economic success, the rigid structures of the 20th-century grid are proving to be its greatest liability. Developing a single high-voltage transmission line often involves a labyrinth of multi-state permitting and environmental reviews that can stall projects for years. In the current landscape, industries are moving faster than the utilities that serve them, leading to a disconnect where economic expansion is being throttled by an inability to plug into the wall. Consequently, the focus is shifting toward more agile, localized solutions that can be deployed on the ground in a fraction of the time required for traditional infrastructure.

The 128-Gigawatt Hurdle: Why the U.S. Power Grid Is at a Breaking Point

The scale of the current energy challenge is nearly unprecedented in the history of American utilities, as the system must now integrate 128 gigawatts of new capacity in record time. This sudden demand is driven primarily by the power requirements of hyperscale data centers, which consume electricity at a rate that can overwhelm local distribution networks. At the same time, the push to bring manufacturing back to American soil has led to the construction of gigafactories and industrial hubs that require constant, high-intensity energy feeds. This combined pressure is asking the grid to do more than it was ever designed to handle, which increases the risk of instability.

The transition from a fuel-based economy to an electricity-based one has moved from a theoretical policy goal to a concrete physical reality. As sectors ranging from home heating to industrial processing switch to electric power, the load profiles that utilities once relied upon are becoming obsolete. The problem is not just the total amount of energy needed but the speed at which this new load is appearing on the system. Because traditional energy projects are slow to materialize, the gap between what the economy needs and what the grid can provide is widening, creating a bottleneck that threatens to stifle regional growth and national competitiveness.

The Cracks in the Traditional Utility Playbook

For nearly a century, the utility industry has relied on a business model centered around massive capital expenditures on centralized infrastructure. This system was designed to handle the absolute peak hours of the year—those few scorching summer afternoons or freezing winter nights when demand is highest—meaning that for the vast majority of the year, billions of dollars in equipment sit idle. This “build for the peak” philosophy has become increasingly inefficient and expensive, as consumers are left paying for a vast network of wires and plants that are rarely used to their full potential. The traditional playbook assumes that the only way to ensure reliability is to build more of the same, regardless of the cost or the time required.
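The cost of building for the peak can be made concrete with a load factor, the ratio of average demand to peak demand. The sketch below is only an illustration: the function name and the hourly profile are hypothetical and do not come from any utility's data.

```python
def load_factor(hourly_load_mw):
    """Return average load divided by peak load (a value between 0 and 1)."""
    if not hourly_load_mw:
        raise ValueError("need at least one load reading")
    peak = max(hourly_load_mw)
    if peak <= 0:
        raise ValueError("peak load must be positive")
    average = sum(hourly_load_mw) / len(hourly_load_mw)
    return average / peak


# Hypothetical day: demand sits at 600 MW and spikes to 1,000 MW for two hours.
example_day_mw = [600] * 22 + [1000] * 2
factor = load_factor(example_day_mw)
print(f"Load factor: {factor:.2f}")
print(f"Share of peak capacity idle on average: {1 - factor:.0%}")
```

A system sized for the 1,000 MW spike in this made-up profile would leave more than a third of its capacity unused on average, which is the inefficiency the paragraph above describes.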

Evidence of this systemic breakdown is most visible in the interconnection queues of major grid operators like the PJM Interconnection. In these regions, new energy projects are frequently told they must wait an average of eight years just to receive permission to connect to the grid. This backlog represents a catastrophic failure of the current system to adapt to the needs of a modern economy. When a business can build a factory in two years but cannot get power for eight, the traditional utility model is no longer serving its purpose. This disconnect is driving up electricity rates as utilities pass on the costs of congestion and emergency measures to households and businesses.

The Strategic Advantage of Community-Scale Power

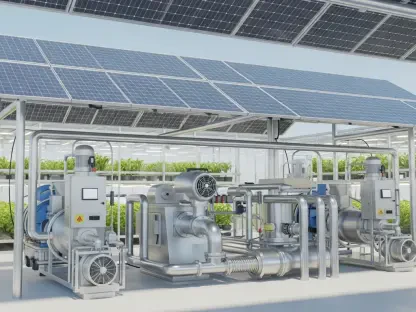

A more precise and effective solution is emerging in the form of community-scale distributed energy resources, typically ranging from 1 to 10 megawatts. These projects, which include localized solar arrays and battery storage systems, act as a middle ground between small residential rooftop units and massive, distant power plants. Because they are situated closer to the actual points of consumption, these assets can be placed strategically at the specific substations or circuits where the grid is most congested. This surgical approach allows for the relief of local stress points without the need for a total overhaul of the surrounding transmission network.
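One way to picture this targeted siting is to rank substations by how close their peak load sits to rated capacity. The following is a minimal sketch under stated assumptions: the `Substation` class, the 90% threshold, and every figure are hypothetical, and real values would have to come from utility distribution data.

```python
from dataclasses import dataclass


@dataclass
class Substation:
    name: str
    rated_capacity_mw: float
    peak_load_mw: float

    @property
    def utilization(self) -> float:
        """Peak load as a fraction of rated capacity."""
        return self.peak_load_mw / self.rated_capacity_mw


def most_congested(substations, threshold=0.9):
    """Return substations at or above the threshold, most stressed first."""
    stressed = [s for s in substations if s.utilization >= threshold]
    return sorted(stressed, key=lambda s: s.utilization, reverse=True)


# Hypothetical substations; the names and numbers are illustrative only.
substations = [
    Substation("North", 40.0, 38.0),
    Substation("River", 25.0, 15.0),
    Substation("Mill", 30.0, 29.4),
]
candidates = most_congested(substations)
for s in candidates:
    print(f"{s.name}: {s.utilization:.0%} of rated capacity at peak")
```

In this toy data set a 1 to 10 megawatt project would be pointed at Mill and North first, while River, with ample headroom, would be skipped.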

Speed is perhaps the most significant advantage of this distributed model, as these projects can move from the drawing board to full operation in as little as 6 to 18 months. This timeline matches the pace of modern industrial development, providing a “just-in-time” energy solution that traditional utilities cannot replicate. By using existing distribution lines and placing generation directly where the growth is happening, developers can get more out of the grid that already exists. This strategy not only addresses the immediate capacity problem but also enhances the overall resilience of the system by creating a more modular and flexible power network.

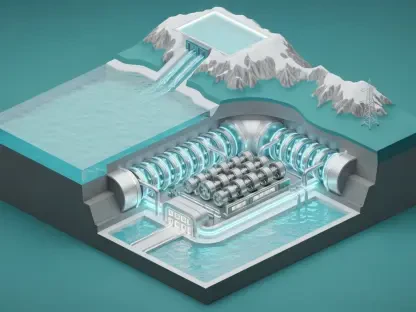

Economic Realities and the Case for Virtual Power Plants

The financial argument for moving toward distributed energy is supported by data from the Department of Energy, which suggests that the adoption of virtual power plants could save the American public $10 billion every year. These virtual plants are essentially networks of smaller, distributed resources that are orchestrated to act as a single, reliable power source during times of high demand. By aggregating the capacity of thousands of local batteries and solar installations, grid operators can achieve the same level of reliability as a traditional gas or coal plant at a significantly lower cost. This shift represents a move toward an “internet of energy” where intelligence and coordination replace the need for redundant, expensive physical hardware.
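The aggregation idea can be sketched in a few lines: many small resources are pooled and dispatched against a single demand target. This is a deliberately simplified illustration, not how any real grid operator or vendor dispatches a virtual power plant; the `Resource` class, the largest-first ordering, and the megawatt figures are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Resource:
    name: str
    available_mw: float


def dispatch(resources, target_mw):
    """Allocate target_mw across resources, largest available output first.

    Returns a (allocations, unmet_mw) pair.
    """
    allocations = {}
    remaining = target_mw
    for r in sorted(resources, key=lambda r: r.available_mw, reverse=True):
        if remaining <= 0:
            break
        share = min(r.available_mw, remaining)
        allocations[r.name] = share
        remaining -= share
    return allocations, max(remaining, 0.0)


# Hypothetical fleet of three small resources.
fleet = [
    Resource("battery_a", 2.0),
    Resource("solar_b", 1.5),
    Resource("battery_c", 4.0),
]
plan, unmet = dispatch(fleet, target_mw=5.0)
print(plan, unmet)
```

A production system would also weigh price, state of charge, and network constraints; the point here is only that the operator sees one 5 MW response rather than three separate devices.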

On a state level, the potential for savings is even more pronounced, as shown by detailed modeling in markets like Massachusetts. Research indicated that by optimizing just 1,800 megawatts of distributed storage, the state could save ratepayers over $2 billion by avoiding the most expensive peak-demand charges. If this localized approach were scaled nationally to cover just 20% of peak demand, the cumulative savings for American households could reach a staggering $170 billion. These figures demonstrate that distributed energy is not merely an environmental preference but a rigorous fiscal strategy designed to protect consumers from the soaring costs of a congested and outdated grid.
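The figures quoted above can be checked against each other with simple arithmetic. The calculation below uses only the numbers in the text, treats "over $2 billion" as exactly $2 billion, and assumes, purely for illustration, that national savings would scale at the same per-megawatt rate as in Massachusetts; the underlying studies may use a different method.

```python
# Figures quoted in the article.
ma_storage_mw = 1_800
ma_savings_usd = 2e9          # "over $2 billion", used here as a floor
national_savings_usd = 170e9

savings_per_mw = ma_savings_usd / ma_storage_mw

# Assumption: the national estimate scales at the Massachusetts per-MW rate.
implied_national_mw = national_savings_usd / savings_per_mw

print(f"Savings per MW: ${savings_per_mw:,.0f}")
print(f"Implied national capacity: {implied_national_mw / 1000:,.0f} GW")
```

Under that assumption the Massachusetts result works out to roughly $1.1 million per megawatt, and the $170 billion national figure would correspond to about 153 gigawatts of distributed capacity.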

A Four-Pillar Framework for Reforming the American Energy Market

To successfully transition to this new energy paradigm, state regulators must implement a structured framework that prioritizes transparency and efficiency over bureaucratic routine. The first pillar is a legal requirement for utilities to share granular, real-time data on the health and capacity of their distribution networks. Without knowing exactly which substations are near their limit, developers cannot build the projects that provide the most grid relief. The second pillar is rigorous cost and risk benchmarking, which ensures that project development risk is borne by private investors rather than the public and rewards the most efficient and reliable projects.

The final two pillars of reform focus on standardization and oversight to remove the friction that currently plagues the energy market. Interconnection rules must be standardized across jurisdictions so that a project relieving grid stress is fast-tracked through the approval process rather than being buried under paperwork. Finally, there must be strong regulatory oversight to ensure that the immense cost savings generated by these distributed systems are actually reflected in the monthly bills of consumers. By creating a competitive and transparent environment, policymakers can unlock the private capital and innovation needed to build a grid that is fast enough to keep up with American industry.

In the final assessment, the transition toward a more distributed energy landscape is the defining challenge of the mid-2020s. The era of relying solely on massive, slow-moving infrastructure projects is reaching its logical end as the demand for immediate power grows too intense to ignore. By shifting toward modular, community-scale assets and virtual power plants, the nation can modernize the grid from the bottom up. This evolution would provide the capacity needed to fuel the data center and manufacturing booms while protecting the economic interests of the average ratepayer. Ultimately, the solution to a national energy crisis lies in the intelligent orchestration of community-level power.