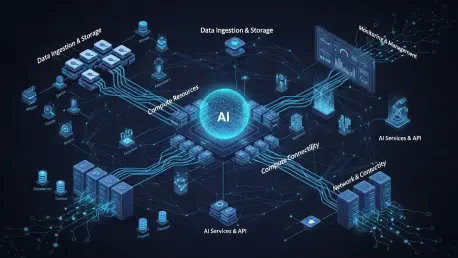

The rapid expansion of artificial intelligence infrastructure is currently colliding with the physical realities of the American energy grid and the expectations of local communities. As tech giants race to deploy massive data centers to power the AI boom, they face a growing structural misalignment between digital ambition and the slow-moving capacity of national power systems. This tension suggests that the future of technological leadership depends less on software innovation and more on securing a social license to operate within physical neighborhoods. The sheer velocity of this expansion has created a significant infrastructure lag, where data centers are built with the speed of software while the power grid remains stuck in an era of slow, predictable growth. Modern data center campuses require immense amounts of power—often between 100 and 300 MW—yet the upgrades needed to support them take years to complete. This timeline mismatch forces utilities to draw down safety buffers, effectively transferring the risk of power outages from wealthy tech firms to ordinary citizens who rely on the same grid for their basic needs and daily lives.

Navigating Technical Constraints and Fair Economics

Resolving the Grid Mismatch: Protecting Public Infrastructure

The technical burden placed on the electrical grid has rapidly evolved into a political liability as residents begin to view data centers as extractive enterprises that offer little back to the local community. When reliability buffers are stretched thin to accommodate hyperscale demand, the general public bears the brunt of potential failures, especially during extreme weather events that tax the system. To prevent this, experts suggest that the invisible transfer of risk must be stopped by ensuring that the speed of tech deployment does not compromise the stability of essential public utilities. This requires a shift in how grid capacity is allocated, moving away from a first-come, first-served model that favors fast-moving tech developers over long-term residential stability. Engineers are now advocating for more rigorous stress testing of local grids before any new large-scale interconnection is approved, ensuring that the existing population is not left vulnerable to brownouts or systemic instability just to satisfy the cooling and processing needs of a new AI cluster.
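One way to picture the kind of screening described above is a planning reserve margin check, which asks how much spare capacity remains once a new load is added at peak. The sketch below is illustrative only: the function names, the 15 percent target, and every megawatt figure are assumptions chosen for the example, not values from any utility or regulator. A real interconnection study would also involve power-flow and contingency analysis.

```python
def reserve_margin(capacity_mw: float, peak_demand_mw: float) -> float:
    """Planning reserve margin: spare capacity as a fraction of peak demand."""
    return (capacity_mw - peak_demand_mw) / peak_demand_mw


def passes_screen(capacity_mw: float, peak_demand_mw: float,
                  new_load_mw: float, target_margin: float = 0.15) -> bool:
    """Return True if the margin stays at or above the target after adding the load.

    Assumes, conservatively, that the new load draws full power during the peak.
    The 15% default target is a hypothetical placeholder.
    """
    return reserve_margin(capacity_mw, peak_demand_mw + new_load_mw) >= target_margin


if __name__ == "__main__":
    # Hypothetical regional figures, in megawatts.
    capacity, peak = 11_500.0, 10_000.0
    print(f"margin today: {reserve_margin(capacity, peak):.1%}")
    print(f"margin with a 300 MW campus: {reserve_margin(capacity, peak + 300):.1%}")
    print("approve without upgrades:", passes_screen(capacity, peak, 300))
```

In this hypothetical case the region meets the target before the new campus connects but falls below it afterward, which is the situation in which approval would be tied to upgrades or flexibility commitments.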

Furthermore, the chronological gap between the construction of a data center and the completion of necessary transmission lines creates a period of high vulnerability for the surrounding region. While a server farm can be erected and populated with hardware in less than two years, the regulatory and physical process of expanding high-voltage transmission often takes five years or more, so a line planned in 2026 may not enter service until 2031 or later. During this interval, the grid operator must often perform a delicate balancing act, prioritizing these massive new loads to avoid contractual penalties while hoping that no major equipment failure occurs elsewhere in the circuit. This precarious situation turns a purely engineering challenge into a source of public anxiety, as local governments realize that their energy security is being traded for corporate expansion. To restore trust, the industry must move toward a model where infrastructure upgrades are synchronized with facility construction, rather than treated as an afterthought to be handled by the utility company long after the servers have gone online.

Fiscal Responsibility: Defending the Principle of Beneficiary Pays

Beyond technical reliability, the financial impact on the average ratepayer has become a central point of contention in the AI infrastructure debate across the country. While large industrial customers historically helped lower costs for everyone by spreading fixed costs across a larger volume of sales, the sheer scale of current demand threatens to drive electricity prices up to cover massive capital expenditures. Protecting the public requires a strict beneficiary pays principle, ensuring that the corporations triggering these multi-billion dollar upgrades are the ones footing the bill, rather than captive residential consumers who have no choice in their provider. This means that if a utility needs to build a new substation or reinforce a hundred miles of transmission line specifically to serve a 500-MW data center campus, the cost of that specific project should not be socialized across the entire rate base of the state or region.
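The arithmetic behind the beneficiary pays principle can be sketched in a few lines. The example below is a simplified illustration, not a real tariff methodology: the function name, the idea of a single "share caused by the developer" figure, and the dollar and customer counts are all assumptions made for the sake of the example.

```python
def allocate_upgrade_cost(upgrade_cost: float,
                          share_caused_by_developer: float,
                          num_ratepayers: int) -> tuple[float, float]:
    """Split an upgrade's cost between the developer and the general rate base.

    share_caused_by_developer is the fraction (0.0 to 1.0) of the upgrade
    attributed to the new load; how that fraction is determined is outside
    this sketch. Returns (developer_bill, cost_per_ratepayer).
    """
    if not 0.0 <= share_caused_by_developer <= 1.0:
        raise ValueError("share_caused_by_developer must be between 0 and 1")
    developer_bill = upgrade_cost * share_caused_by_developer
    socialized = upgrade_cost - developer_bill
    return developer_bill, socialized / num_ratepayers


if __name__ == "__main__":
    # Hypothetical: a $2 billion upgrade in a territory with 1 million ratepayers.
    cost, ratepayers = 2_000_000_000, 1_000_000
    for share in (0.0, 0.5, 1.0):
        dev, per_customer = allocate_upgrade_cost(cost, share, ratepayers)
        print(f"developer share {share:.0%}: developer pays ${dev:,.0f}, "
              f"each ratepayer pays ${per_customer:,.2f}")
```

Under full socialization each ratepayer in this hypothetical carries a share of the project; under strict beneficiary pays the residential share drops to zero and the developer carries the full amount.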

Implementing this economic protection requires a sophisticated regulatory framework that can distinguish between general grid modernization and the specific needs of hyperscale users. For instance, state utility commissions are increasingly exploring performance-based regulation that ties corporate incentives to the actual benefit provided to the local economy. Without these guardrails, there is a significant risk of stranded assets; if the AI market shifts or a specific facility becomes obsolete, the local ratepayers could be left paying off the debt for infrastructure that no longer serves a productive purpose. By requiring developers to provide upfront capital or long-term financial guarantees for specialized grid enhancements, regulators can insulate the public from the volatility of the tech sector. This approach ensures that the digital revolution pays its own way, transforming the perception of data centers from parasitic loads into responsible corporate citizens that invest in the physical foundations of the regions they occupy.

Addressing Environmental Impact and Local Resistance

Mitigation of Resource Scarcity: Addressing the Water and Land Crisis

The physical footprint of AI—specifically its massive consumption of water and land—has sparked organized resistance in once-friendly regions where resources were previously considered abundant. In areas like Texas and Oregon, competition for limited water resources used for cooling has moved from a theoretical concern to a local crisis that threatens agricultural and municipal supplies. Projects are increasingly being rejected not out of a fear of technology, but due to a rational assessment that minimal local job creation does not justify the resulting noise, grid strain, and resource depletion. For example, a single large-scale data center can consume hundreds of millions of gallons of water annually, often competing directly with local farmers or the drinking water needs of a growing town. This consumption is becoming harder to justify when the facility itself employs only a few dozen specialized technicians while occupying hundreds of acres of prime real estate.

To mitigate these conflicts, developers are being pushed to adopt more sustainable cooling technologies, such as closed-loop systems or air-cooled designs that significantly reduce their water intensity. However, these solutions often come with higher energy requirements or increased noise levels from massive fan arrays, creating a new set of problems for nearby residents. The transition away from the traditional decide-announce-defend model is essential here, as communities demand a seat at the table during the earliest stages of site selection. When developers are transparent about their resource needs and willing to invest in local water treatment or reclamation facilities as part of their project, the environmental friction can be reduced. Success in 2026 depends on whether these companies can prove they are not just consuming local resources, but are actively contributing to the long-term sustainability of the local ecosystem through innovative engineering and resource conservation.

Planning for Compatibility: Moving Beyond Development Friction

In places like Virginia, landmark court cases have halted massive developments, proving that historical and environmental concerns can successfully block billions in capital if the community feels unheard. The traditional approach of presenting a finished plan to a local board and expecting immediate approval is no longer functional in an environment where citizens are highly informed and organized. To move forward, the industry must adopt a collaborative approach that identifies high-compatibility zones where infrastructure can grow without infringing on fragile ecosystems or overextending local utilities. These zones are typically brownfield sites, former industrial areas, or land adjacent to existing heavy power infrastructure where the environmental impact is already managed. By steering development toward these areas, the industry can avoid the prolonged legal battles that currently delay projects and inflate costs.

Effective regional planning also involves a more nuanced understanding of the social fabric of a community, including the protection of historical landmarks and scenic vistas. When a data center is proposed near a national park or a site of cultural significance, the pushback is often intense and well-funded, leading to years of litigation that serves neither the developer nor the public. By engaging with local conservation groups and planning commissions early in the process, tech firms can design facilities that blend into the landscape or provide tangible community offsets, such as new public parks or the preservation of adjacent green spaces. This strategy moves the conversation from a zero-sum game of development versus preservation toward a more integrated vision of how digital infrastructure can coexist with the physical world. The goal is to create a scenario where the presence of a data center is seen as a stabilizing factor for a region rather than a disruption to its character.

Strategies for Regional Integration and Reciprocity

Modernizing Markets: Turning Data Centers into Grid Assets

A sustainable path forward requires treating data centers as sophisticated grid assets that can offer flexibility rather than just acting as passive consumers of power. By implementing market reforms, utilities can reward data centers for providing ancillary services, such as load-balancing and demand response using on-site energy storage or backup generation. This turns a potential burden into a tool for grid stability, ensuring that high-priority tech infrastructure contributes to the overall health of the electrical network during times of peak demand. For instance, a data center equipped with large-scale battery storage could feed power back into the grid during a heatwave, helping to prevent blackouts for residential neighborhoods. This shift requires a modernization of the regulatory language and market incentives that govern how large industrial users interact with the regional transmission organization.
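The heatwave scenario above can be illustrated with a toy dispatch rule: discharge on-site storage only in hours when regional load exceeds a threshold. This is a minimal sketch under stated assumptions, namely hourly time steps, a lossless battery, and made-up load figures; the function name and parameters are hypothetical and do not reflect any market operator's actual rules.

```python
def dispatch_battery(grid_load_mw, threshold_mw, energy_mwh, max_power_mw):
    """Return the battery's hourly discharge (MW) for a series of hourly loads.

    In each hour the battery covers as much of the load above threshold_mw
    as its power rating and remaining stored energy allow. Because steps are
    one hour long, MW discharged equals MWh consumed from storage.
    """
    remaining_mwh = energy_mwh
    discharge = []
    for load in grid_load_mw:
        excess = max(0.0, load - threshold_mw)
        output = min(excess, max_power_mw, remaining_mwh)
        discharge.append(output)
        remaining_mwh -= output
    return discharge


if __name__ == "__main__":
    # Hypothetical afternoon load in MW, with a 1,000 MW stress threshold.
    hourly_load = [900, 1000, 1100, 1050]
    plan = dispatch_battery(hourly_load, threshold_mw=1000,
                            energy_mwh=100, max_power_mw=80)
    for load, out in zip(hourly_load, plan):
        print(f"load {load} MW -> battery supplies {out} MW")
```

A market reform of the kind described would then pay the facility for the megawatts delivered in the stressed hours, which is what turns the battery from a private backup into a shared reliability resource.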

Furthermore, the integration of on-site renewable energy and microgrid capabilities allows these facilities to operate independently during grid stress, reducing the overall pressure on the public system. When a data center generates its own solar or wind power and manages its own storage, it becomes a partner in the energy transition rather than a competitor for existing green resources. This level of technical sophistication allows regulators to view hyperscalers as potential stabilizers for a grid that is increasingly reliant on intermittent renewable sources. By formalizing these arrangements through grid-service contracts, the relationship between tech companies and utilities can be reimagined as a symbiotic partnership. This approach not only enhances the reliability of the AI infrastructure itself but also provides a measurable benefit to all users of the grid, making the case for public support much easier to sustain in the long run.

Radical Reciprocity: Enhancing Community Value Through Integration

The final pillar of maintaining public trust is radical economic reciprocity, which replaces simple charitable donations with meaningful community benefit agreements that create lasting value. This involves engineering infrastructure upgrades that improve the local grid for everyone and investing in specialized workforce development to create high-skilled local jobs for the existing population. For example, if a data center requires a new high-speed fiber optic line, that project could be expanded to provide broadband access to underserved rural areas in the same county. Similarly, by partnering with local community colleges to establish mechanical and electrical apprenticeship programs, hyperscalers can ensure that the economic benefits of the AI boom reach the people living in the facility’s shadow. This moves the industry away from the job-to-acreage deficit that currently fuels local resentment and provides a pathway to generational wealth for residents.

True reciprocity also means ensuring that the tax revenue generated by these massive investments is used transparently to improve the lives of local citizens. When a community can see a direct, tangible improvement in their own quality of life—such as better-funded schools, repaired roads, or a more resilient power supply—the digital buildout is finally viewed as a shared civic asset. This requires developers to move beyond performative public relations and engage in deep, long-term partnerships with local government officials. In the years from 2026 to 2030, the most successful AI infrastructure projects will be those that were built with the community, not just in it. By institutionalizing these benefits through legally binding agreements, tech companies can secure the long-term stability they need to operate while proving that the growth of artificial intelligence can be a rising tide that lifts all boats.

The path toward a sustainable digital future depends on a fundamental shift in how technology leaders view their relationship with the physical world. The technical hurdles of energy consumption and grid capacity are inseparable from the social requirements of transparency and fairness. To move forward, stakeholders must prioritize regional planning maps that steer development toward high-compatibility zones while mandating that all new large-scale loads contribute to grid stability through demand-response programs. Regulatory bodies should adopt the beneficiary pays model to protect residential ratepayers from the costs of specialized infrastructure upgrades, ensuring that the economic burden remains with the entities driving the demand. Developers, in turn, should formalize community benefit agreements that link facility approvals to local workforce training and broader infrastructure improvements. By treating AI infrastructure as a civic responsibility rather than a private enclave, the industry can secure the public trust necessary to build the foundation of the modern era.