Christopher Hailstone stands as a cornerstone in the modern energy landscape, bringing decades of experience in navigating the complex intersection of grid reliability and renewable integration. As a leading utilities expert, he has spent his career dissecting the technical and financial mechanisms that keep the lights on during periods of unprecedented demand. His deep understanding of power generation portfolios, from legacy coal assets to cutting-edge nuclear uprates, allows him to provide a grounded perspective on the volatile market shifts currently reshaping the American power sector.

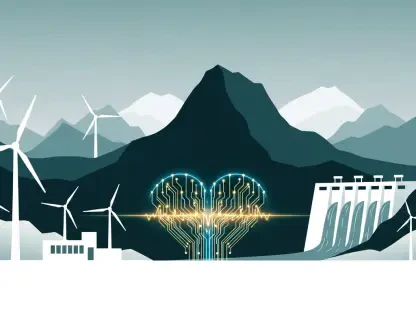

Today, we explore the strategic evolution of utility infrastructure, examining how major market players are balancing the massive energy requirements of the digital age with the physical realities of the grid. We will touch upon the shifting growth forecasts in key regions like Texas and PJM, the structural drivers behind significant power price spikes, and the nuanced role of energy storage in a market still heavily reliant on dispatchable gas and nuclear power.

Forecasts suggest annual load growth could hit 6% in Texas and 3% in the PJM region. How do these figures contrast with more aggressive market projections, and what specific physical constraints typically slow down the actual pace of infrastructure development in these territories?

While some third-party analysts and system operators have released much more aggressive projections, we believe that annual growth of 5% to 6% in ERCOT and 2% to 3% in PJM is a more grounded reality. There is often a wide disparity in views because many observers expect demand to compound at a rate that the physical world simply cannot support. We have to account for the grueling timelines of permitting, supply chain lead times for specialized equipment, and the sheer labor required for site preparation. It takes much longer to develop high-voltage infrastructure than the market often imagines, and these physical bottlenecks act as a natural brake on even the most ambitious expansion plans.
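To see why the gap between forecasts matters, it helps to compound the quoted annual rates over several years. The sketch below is illustrative only: the ten-year horizon and the `growth_multiple` helper are assumptions, not figures or methods from the interview.

```python
def growth_multiple(annual_growth: float, years: int) -> float:
    """Return the factor by which load grows after compounding for `years`."""
    return (1.0 + annual_growth) ** years


# Annual growth ranges quoted in the interview; the horizon is an assumption.
HORIZON_YEARS = 10
scenarios = {
    "ERCOT low (5%)": 0.05,
    "ERCOT high (6%)": 0.06,
    "PJM low (2%)": 0.02,
    "PJM high (3%)": 0.03,
}

for name, rate in scenarios.items():
    multiple = growth_multiple(rate, HORIZON_YEARS)
    print(f"{name}: load multiplies by {multiple:.2f} over {HORIZON_YEARS} years")
```

Even a one-point difference in the annual rate compounds into a noticeably different load level after a decade, which is part of why forecasts diverge so widely.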

Tech giants are increasingly securing massive blocks of nuclear power through multi-decade agreements to support data center expansion. What are the primary technical hurdles when performing uprates on existing nuclear plants, and how do these partnerships influence the way generators plan for future capacity?

Performing uprates at facilities like the Perry and Davis-Besse plants in Ohio is a complex engineering feat that requires precision timing to increase a plant’s maximum power level. The technical hurdles involve modifying cooling systems, turbines, and secondary-side components to handle increased steam flow while maintaining the strictest safety margins. Partnerships like the 20-year agreement to provide over 2.1 GW of power to Meta provide the long-term financial certainty needed to greenlight these capital-intensive projects. When a “hyperscaler” commits to such a massive block of power, it allows us to plan capacity with a level of confidence that wasn’t possible in the era of shorter-term, purely merchant power sales.

Wholesale power prices in certain regional hubs have spiked by over 80% year-over-year. Beyond simple supply and demand, what underlying structural factors are driving this price volatility, and what steps are necessary to stabilize the market while maintaining a high volume of active construction projects?

The volatility we are seeing is staggering, particularly in the PJM Western Hub where average prices jumped from $53.91/MWh to $97.41/MWh in just one year. This 81% increase is driven by a structurally improved demand environment where load remains elevated even as older, less efficient units retire. To stabilize these markets, we need a regulatory environment that rewards the reliability of dispatchable assets while also allowing for the integration of the 4.5 GW of new capacity we currently have in process. Achieving stability requires a careful balance between incentivizing new construction and ensuring that the existing fleet remains economically viable through fair capacity payments.
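The 81% figure can be checked directly from the two Western Hub averages quoted above. This is a minimal arithmetic sketch; the `pct_change` helper name is an assumption introduced for illustration.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100.0


# Average PJM Western Hub prices quoted in the interview, in $/MWh.
previous_price = 53.91
current_price = 97.41

increase = pct_change(previous_price, current_price)
print(f"Year-over-year increase: {increase:.1f}%")
```

The result rounds to the 81% cited in the answer.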

Integrating over five gigawatts of gas-fired capacity through acquisitions is a significant move in the current energy landscape. What is the operational logic behind focusing on these assets, and how do coal-to-gas conversions specifically help bridge the gap between legacy systems and modern load requirements?

The acquisition of Cogentrix Energy and its 5.5-GW gas plant portfolio is a strategic move to ensure we have the dispatchable “spinning” reserves necessary to backstop a changing grid. Coal-to-gas conversions are essential because they allow us to repurpose existing interconnection points and infrastructure while significantly reducing the emissions profile of our fleet. These assets provide a vital bridge; they offer the firm, reliable power that data centers and heavy industry require during the transition to a cleaner energy mix. By focusing on these assets, we are essentially future-proofing our ability to meet the demand behind the record capital spending we see from our large-load customers.

Energy storage often faces high costs and domestic sourcing challenges that impact its profitability compared to dispatchable energy. How do you evaluate the trade-offs between wholesale battery deployment and traditional gas assets, and what specific metrics determine whether a storage project is actually financially feasible?

When we look at the math, batteries often struggle to compete with traditional dispatchable options in terms of Internal Rate of Return (IRR) because their costs haven’t dropped as quickly as many predicted. In markets like ERCOT, relying on wholesale batteries to capture a “spark spread” or capacity payment has proven to be a very difficult proposition. Financial feasibility is determined by whether the storage is co-located to support a specific data center’s needs or if it qualifies for Investment Tax Credits, which can be challenging if the components aren’t domestic in origin. While we’ve seen success with legislative frameworks like the Coal to Solar & Energy Storage Act in Illinois, we generally find that gas assets currently provide a more reliable and cost-effective solution for large-scale grid stability.

What is your forecast for the future of grid reliability as hyperscale data centers continue to increase their energy consumption?

My forecast is that grid reliability will increasingly depend on the “behind-the-meter” integration of massive, dedicated power sources rather than relying solely on the open wholesale market. As hyperscalers continue their record spending, we will see a shift toward a more bifurcated grid where large-scale data campuses are directly tethered to nuclear and gas-fired assets to ensure 24/7 uptime. While this adds complexity to grid management, the addition of 4.5 GW of diversified capacity and a focus on realistic growth projections will be the key to preventing widespread instability. We are entering an era where the physical constraints of power generation will be the primary limiting factor for the growth of the digital economy, making long-term power purchase agreements the new gold standard for reliability.